Introduction

With the advent of new mediums and technologies, writing adapts.[1] Whether clay, parchment, or screen, a writer’s materials affect word choice, prose, and style.[2] These linguistic shifts are natural, making language more straightforward and accessible.[3]

Such a shift is occurring in legal practice.

Email is now a practicing lawyer’s primary means of communicating legal analysis.[4] Rejecting the cost, inefficiencies, and formalities of the twentieth-century memorandum,[5] clients and supervisors now demand that documents once ten-plus pages long be condensed into emails of just a few paragraphs.[6] The traditional legal memorandum[7] has been succeeded by a quicker, leaner, and cheaper medium[8]: the e-memo.[9]

Despite some scholarly criticism, the rise of e-memos has not made fundamental legal writing skills obsolete or called for less rigorous legal analysis.[10] Quite the opposite. E-memos have heightened the need for attorneys to write crisp, clear, and concise prose quickly.[11] They have crystallized the importance of precise language while underscoring the difficulty of mastering fundamental writing skills.[12] After all, when a writer has even less space to make a point, every word must matter.[13]

As e-memos have grown in prominence, leaders in higher education simultaneously have pushed for law schools to create “practice-ready” graduates.[14] While it is unclear how effective law schools have been at preparing students,[15] legal writing courses have been at the forefront of the internal drive to ensure students can practice law.[16] Legal writing professors are uniquely positioned among first-year professors to create meaningful assignments based on tested, continually refined pedagogy and backed by a national repository of collaborative professionals.[17]

As legal writing continues to lead the first-year curriculum in preparing students for practice, most legal writing programs have made e-memo assignments a perennial exercise.[18] Moreover, scholars have recognized the importance of making e-memo assignments sufficiently rigorous and realistic.[19] It is imperative, therefore, that as we continue to assign e-memos to our students, our pedagogy remains dedicated to merging theory with practice.[20]

Following Kristen Tiscione’s groundbreaking 2006 study describing the significance of e-memos in practice,[21] this Article uses empirical research to contribute to the growing scholarship about e-memos.[22] Further, this Article provides concrete suggestions that promote a sound pedagogical approach for teaching e-memo drafting.[23] Part 1 reviews past scholarship on how to write e-memos and identifies discrepancies in scholarly advice and textbook samples. Part 2 details a 2018-2019 study that asked over 100 attorneys to rank and evaluate sample e-memos. Part 3 provides the study’s results and statistical analyses. These data reveal a surprising and stark divide in which sample e-memos attorneys preferred, depending on their age, the number of e-memos they write per month, and the size of their practice. Part 4 discusses additional research findings about attorneys’ e-memo preferences and suggests what these results mean for the legal writing classroom. Part 5 then recommends that professors adopt substantive e-memo assignments that require in-depth analysis presented with pinpoint concision—what this Article terms “iceberg e-memos.”

1. E-Memos 1.0: A Literature Review

When e-memos first emerged, attorneys were left to build new structures with outdated blueprints.[24] But as law practice embraced the e-memo, textbooks,[25] articles,[26] and websites[27] laid a foundation of advice for working with this new medium. Most of this guidance remains consistent, with scholars agreeing the e-memo format must be “flexible.”[28] Rightly, scholars have emphasized that an organic writing process must reign over a prescribed product.[29] After all, legal writing pedagogy targets the current and future writing process, not redlining a product ex post facto.[30] There is no boilerplate memorandum that attorneys can fill in like Mad Libs.[31] Still, however organic, the writing process must lead to a final product,[32] and that product should fit within a familiar genre that meets a reader’s needs and expectations.[33]

Therefore, while each e-memo should be flexible enough to fit its unique question presented, scholars have identified the following best practices for writing e-memos:

- Start by restating the issue.[34]
- Include an analysis of the law and its application to the facts.[35]
- Rely more extensively on explanatory parentheticals than on case illustrations.[36]
- Conclude with the writer’s recommendations.[37]
- Avoid repeating the given facts.[38]
- Keep the product to approximately one to two traditional pages.[39]

With the above points as a foundation, textbook authors frequently supplement their advice with samples illustrating their suggestions.[42] These samples are vital in showing the expectations of law practice, demonstrating logical organization, and turning abstract concepts into physical specimens students can dissect and internalize.[43] But for samples to be beneficial to the inquiring learner, they must follow best practices and be realistic.[44] Unfortunately, as e-memo pedagogy evolved, academic advice branched in diverse directions. Many samples reveal scholarly inconsistencies in how to write e-memos, with samples presenting differing levels of analysis for ostensibly comparable research questions.[45] Whereas some scholars advise a paradigmatic e-memo might include case illustrations[46] and counter-analyses,[47] others bluntly compare an e-memo to a “telegram”[48] and provide samples answering substantive legal questions with no reference to legal authorities.[49]

Every reader, every law firm, and every question is unique, but the contradictory samples are not merely a similar format stretching to meet distinctive needs; the differences in analytical depth from one text to another make deciphering a pattern among samples untenable.[50] Crucially, the substantive e-memo samples vary in how they apply facts to the law—“the magic moment of law practice” for which attorneys are paid.[51] Most texts offer only the cursory advice that an e-memo should include an application if needed.[52]

The texts and samples further disagree about when and how to cite authority. One article recommends that e-memos should “name important statutes, refer to important cases by shorthand, and mention the jurisdiction” but not be “clutter[ed] with formal, full-form citations.”[53] The article continues by recommending that writers consider listing full citations at the end of the e-memo.[54] The samples from several other texts echo this advice, with some samples providing no citations and others omitting pinpoint citations or id. citations.[55] One text explicitly espouses this sentiment, suggesting writers include citations for “important authority” but “less frequently.”[56] In contrast, other scholars model the importance of formal citations in their samples, citing after each sentence that needs support.[57]

Samples even disagree on how and when to provide conclusions—with some samples giving the reader answers with reasoning while others offer brusque responses.[58] More importantly, although most texts agree a conclusion should be stated at the beginning of an e-memo,[59] one prominent text[60] contradicts this point, urging writers to consider whether to place “bad” news at the end of an e-memo.[61] Other texts do not overtly state this point but offer samples that hold off on answering the research question until the e-memo ends.[62]

Some discrepancies among scholars are to be expected in this new field of study. Moreover, each e-memo is unique to its research question and reader. But all legal analysis should be appropriately unique—including traditional memoranda.[63] When it comes to the widely employed e-memo, students and practitioners need more than varied samples; they need samples that follow best practices.[64] Sound pedagogy depends upon it: Without numerous realistic samples, asking students and new practitioners to write a flexible e-memo is making “an impossible request.”[65] The next stage in teaching e-memos then requires building on our pedagogical foundations with empirical evidence.[66]

2. Brief Overview of Findings and Study Methodology

To continue the ongoing process of updating and formalizing best practices for drafting substantive e-memos, the study set out to discover how practicing attorneys actually write. While I had anecdotal evidence and could continue to call friends in practice for advice, I wanted more concrete findings with which to build upon the existing foundation. Therefore, I designed this study with three objectives:

- To provide attorneys with differing sample e-memos and ask which samples they preferred.
- To find any correlations between respondent demographics and sample e-memo preferences.
- To collect general information about e-memo habits.

As fully explained in Part 3, the results show that respondents generally favored the e-memo samples that elected depth over brevity. Additionally, respondents disliked the samples with short answers and little reasoning. Thus, it is not terse conclusions with few citations that best represent modern practice. Whether the sample answered a complex client question or a simple statutory issue, respondents wanted e-memos with clear reasoning and robust recommendations.

Yet, despite some unity in preferring the more detailed samples, respondents split in their predilections towards concision. Importantly, the study reveals a surprising division between attorneys who favor longer e-memo samples mirroring traditional memoranda and attorneys who favor concision in an e-memo that still provides a complete and well-reasoned answer. Attorneys who more often value e-memos that follow a traditional format include (1) those over 40,[67] (2) those who write fewer than four e-memos a month,[68] and (3) those who work in private firms of 50 or fewer attorneys.[69] By contrast, the attorneys who value concise prose and organization include (1) those 40 and under,[70] (2) those who write four or more e-memos a month,[71] and (3) those who work in private firms with over 50 attorneys.[72]

As detailed in Part 4, the study’s findings further provide information about respondents’ general preferences and habits regarding e-memos. Of particular interest for those teaching e-memos in the classroom, the responses show writing an effective e-memo means:

- Including upfront answers with detailed reasoning;[73]
- Having crisp applications with clear recommendations;[74]
- Incorporating explanatory parentheticals;[75]
- Following proper citation formatting;[76]
- Relying on citation signals;[77]
- Attaching applicable authority;[78]
- Hyperlinking to authority;[79] and
- Producing polished documents within 48 hours.[80]

Additionally, the study’s results demonstrate that even with the rise of e-memos, legal writing courses must continue to incorporate traditional memoranda assignments. Scholars have repeatedly remarked on the pedagogical benefits of teaching learners to write traditional memoranda, regardless of whether memoranda are still heavily used by attorneys.[81] The study confirms the need to teach traditional memoranda—not only because these writing assignments are valuable teaching tools but because traditional memoranda are indeed still part of private practice.[82]

Before providing more specifics about the results, however, it is necessary to discuss the study’s methodology. The next portion of this Article overviews the respondent recruitment process and respondent demographic information. The Article then details my methodology when creating the sample e-memos used in the study and compares and contrasts the samples.

2.1 Respondents

Respondents were a sampling of graduates from Indiana University Robert H. McKinney School of Law and the University of Missouri School of Law, plus a handful of practitioners from other schools who expressed interest in the survey.[83] The first participants identified included attorneys I personally know. The pool of respondents then expanded to attorneys in recent contact with IU McKinney’s Office of Professional Development, those who responded to a general call through the Indianapolis Bar Association, and those who contacted me after being notified about the survey by other respondents. One hundred thirteen attorneys participated.[84]

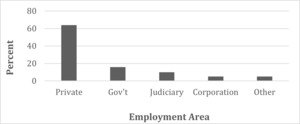

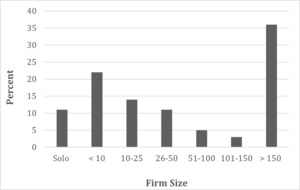

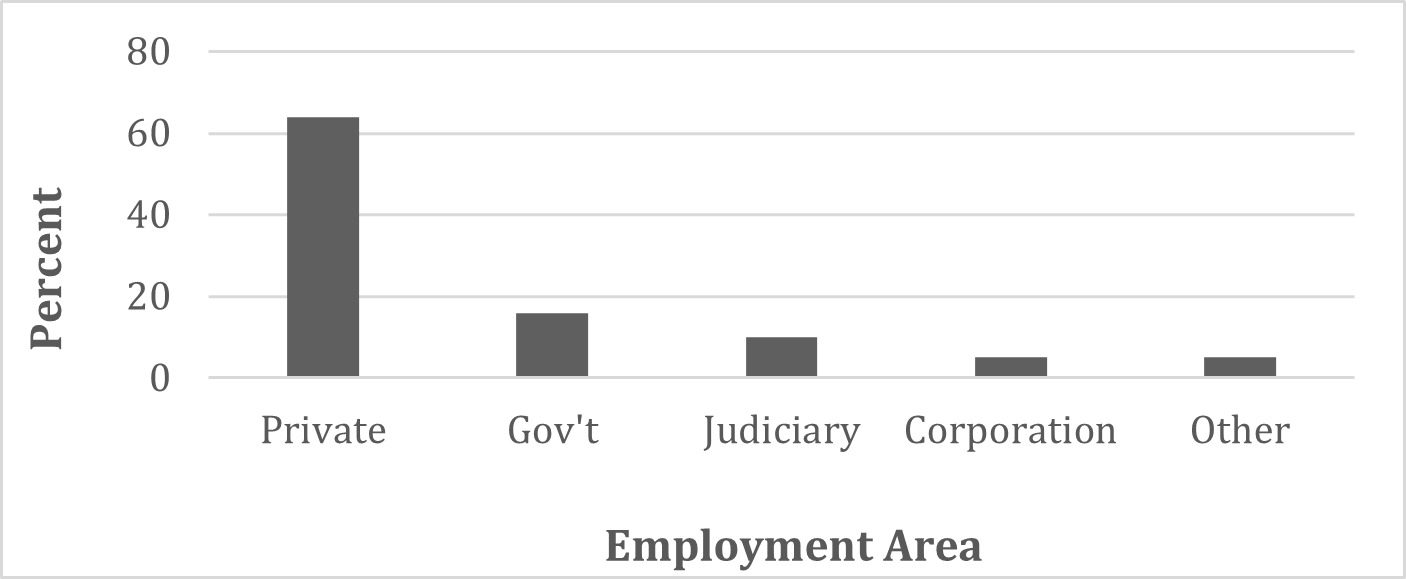

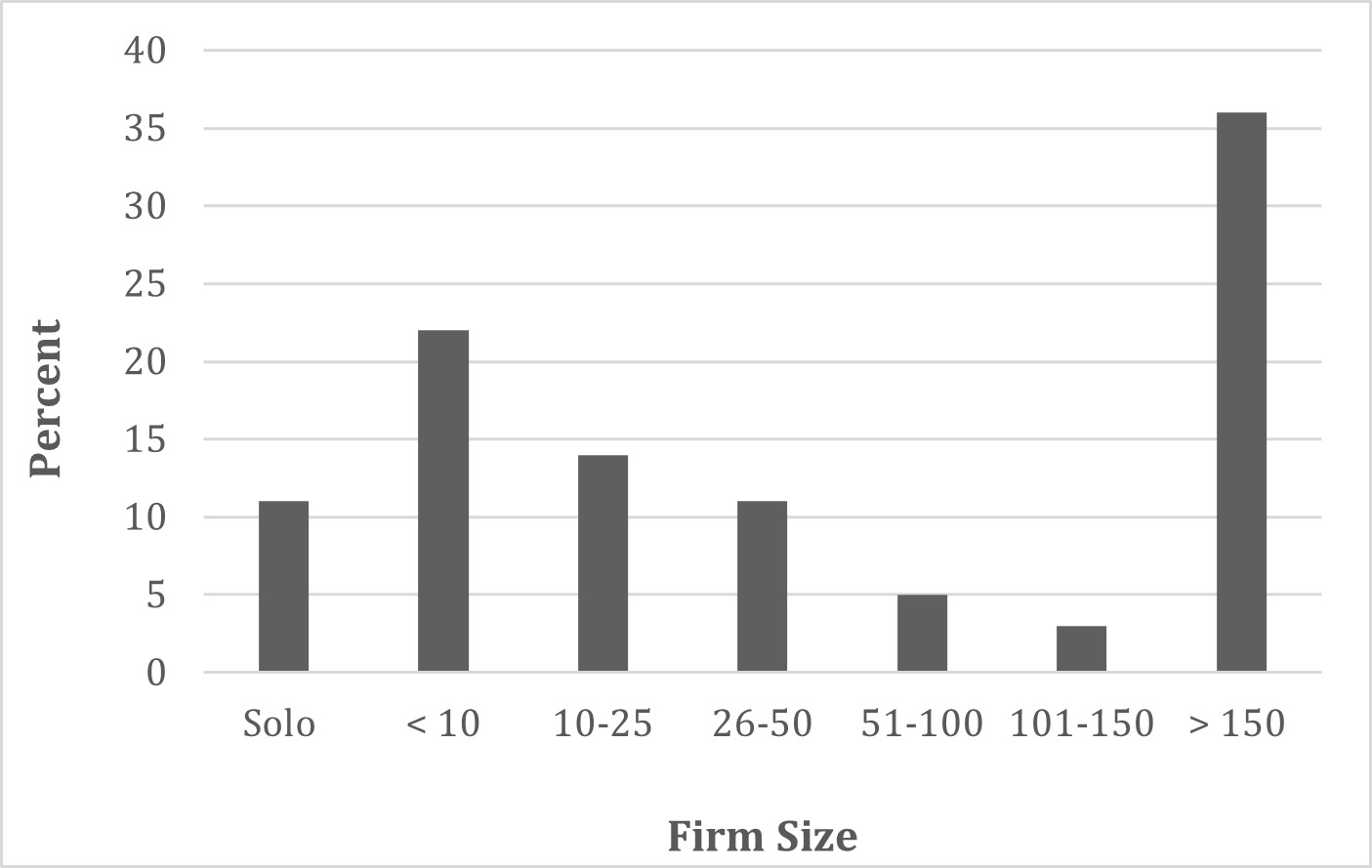

One hundred of the 113 respondents identified where they were employed, with the majority of attorneys—64%—working in private practice.[85] Sixteen percent worked in city, county, state, or federal government; 10% worked in the judiciary; 5% worked for a corporation; and 5% marked “other.”[86] Of the respondents working in private firms, the highest percentage—approximately 36%—worked in firms of over 150 attorneys.[87]

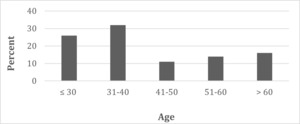

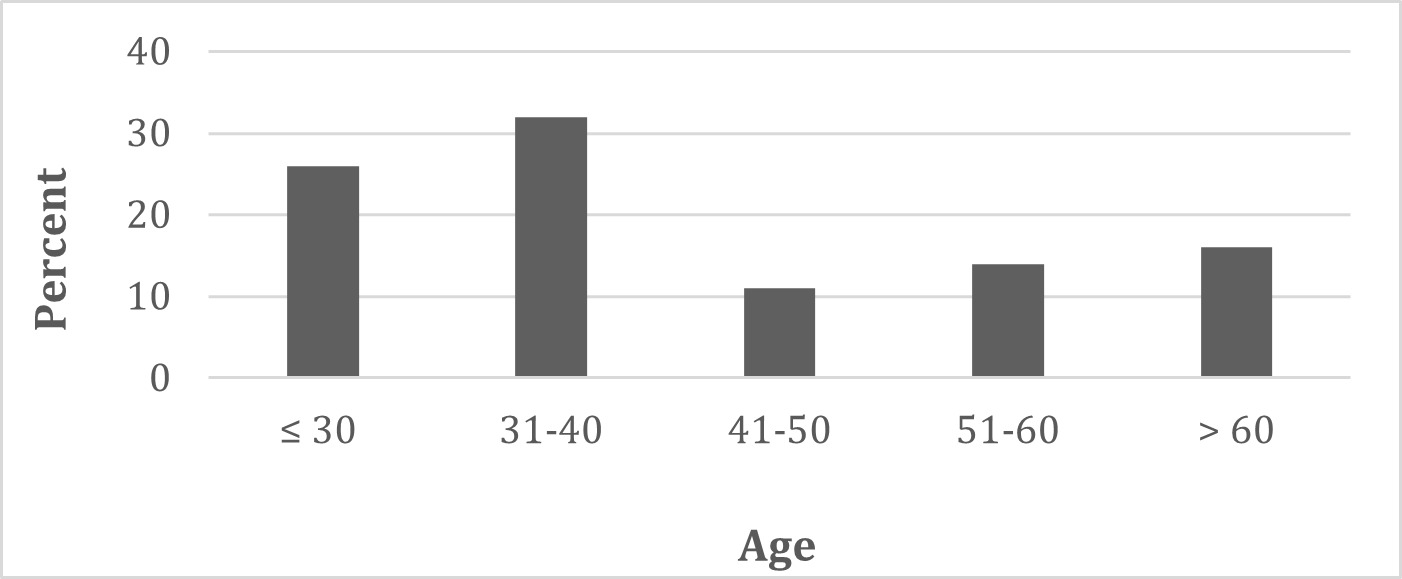

Of the 99 attorneys who indicated their age, 26% were 30 years old or younger; 32% were 31-40; 11% were 41-50; 14% were 51-60; and 16% were over 60.[88]

2.2 The Samples

Creating sample e-memos for attorneys to review first required me to write sample research questions. To ensure better data, I drafted two questions of differing complexity.[89] The first question—Question “x”—was more complicated and asked an associate to write an e-memo analyzing whether a dog qualifies as a “service animal” under the Americans with Disabilities Act (ADA).[90] In this problem, which I created with Anne Alexander,[91] the partner told the associate that the client has Post-Traumatic Stress Disorder and that petting and holding his dog alleviates the client’s symptoms. In addition to the specific facts, to fully answer the partner’s question, a writer needed to rely on a federal regulation and federal cases.

The second question—Question “y”—was more straightforward and required analyzing a basic statutory issue and applying the law to the client’s facts.[92] In this problem, which was created by Melody Daily,[93] the supervisor asked the associate to research and report whether a landowner could bulldoze tombstones located on his property or sell a wrought-iron fence surrounding the cemetery, and, if not, whether the client would be criminally penalized for such actions. The associate needed only to find and analyze statutory authority.

To make the survey manageable for participants, I created four sample e-memos to answer each question. For data purposes, the samples answering the more complex ADA research question are the “x samples”—A-x, B-x, C-x, and D-x.[94] The samples answering the simpler statutory question are the “y samples”—A-y, B-y, C-y, and D-y.[95]

While the four samples in each dataset were substantively similar and contained the same overall conclusions, each sample varied in length, analytical depth, use of citations, and organization. Because the survey’s goal was to test the substantive discrepancies identified in the literature review, the samples did not investigate non-substantive, agreed-upon best practices, such as typography and document design.[96]

To test the discrepancies highlighted in the literature review, each sample roughly reflected the advice and samples of one scholar or group of scholars, as denoted in the chart below. For example, the A Samples (both A-x and A-y) were the longest and most “traditional.” Mirroring a traditional memorandum’s question presented and brief answer, these samples included a bolded heading for the “issue” and the “answer.” The A Samples also had the lengthiest discussions of legal authority: Sample A-x had two case illustrations and an explanatory parenthetical to a third case before applying the facts to the law; Sample A-y block quoted relevant statutory language while the other y-issue samples merely summarized the statute.

Following the advice of, among others, Kristen Tiscione, Charles Calleros, and Kimberly Holst, I created the C Samples in an attempt to balance a comprehensive legal analysis with concise prose and formatting.[97] In this way, the C Samples best model what the Article later describes in depth as “iceberg e-memos”—e-memos that have analytical depth but tactically omit information the reader already knows or does not need.[98] And, as discussed in Part 3, attorneys generally favored the C Samples because of this strategic concision.

I created all of the samples to be viable options.[99] Each is meant to be an authentic, acceptable e-memo, not a caricature of scholarly advice. Sample A-x, for example, is not overly verbose, pedantic, or mechanical.

The eight samples are attached as appendices, but below is a synopsis of each.

The 113 respondents who agreed to participate were randomly assigned to review either the four more complex e-memos (the ADA or “x-issue” samples) or the four simpler e-memos (the cemetery or “y-issue” samples).[111] I then sent each respondent the appropriate mock research question and its corresponding sample e-memos. After they reviewed the materials, respondents proceeded to an online survey, which began by asking respondents to rank the samples and provide comments about what they liked and disliked about each.

3. Respondent E-Memo Rankings

Whether reviewing the x-issue or y-issue datasets, respondents most often gave the highest rankings to the “iceberg” C Samples and “traditional” A Samples.[112] Directly refuting the numerous textbook samples that do not include explanation of or citation to the law, the results reveal attorneys prefer substantive e-memos to be just that—substantive. Indeed, the results from both datasets show the vast majority of respondents judged the short D Samples as the worst e-memos.[113]

Crucially, although many respondents favored the longer, more traditional A Samples, others criticized the A Samples’ length and formality. Only the D Samples received a fourth-place ranking more often.[114] In fact, a higher percentage of respondents gave the A Samples a fourth-place ranking than gave the B and C Samples combined.[115] The “traditional” A Samples, therefore, more than any other samples, show a schism in respondents’ preferences.

To better understand the data, it was thus necessary to do more than review how many respondents gave a particular sample the highest or lowest rank; each sample needed an aggregate score.[116] This was achieved by assigning each ranking a numerical weight inverse to its rank—the higher the ranking, the more weight a choice received.[117] Each weight was then multiplied by the number of respondents who assigned that ranking to a particular sample. To achieve a final score for each sample, these products were summed and divided by the number of respondents.[118]
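The scoring arithmetic described above can be sketched as follows. This is a minimal illustration only: it assumes weights of 4, 3, 2, and 1 for first- through fourth-place rankings (the exact weights are an assumption, not stated in the text), and the counts in the example are hypothetical, not the study’s data.

```python
def aggregate_score(rank_counts):
    """Weighted ranking score for one sample (illustrative sketch).

    rank_counts[i] is the number of respondents who gave the sample
    rank i + 1 (index 0 = first place). Weights are ASSUMED to be the
    inverse of rank: with four samples, first place = 4, fourth place = 1.
    """
    num_ranks = len(rank_counts)
    respondents = sum(rank_counts)
    if respondents == 0:
        raise ValueError("no respondents ranked this sample")
    # Multiply each weight by the number of respondents who assigned that rank
    weighted_total = sum(
        (num_ranks - i) * count for i, count in enumerate(rank_counts)
    )
    # Sum the products and divide by the number of respondents
    return weighted_total / respondents


# Hypothetical counts (not study data): 10 first, 5 second, 3 third, 2 fourth
example = aggregate_score([10, 5, 3, 2])
print(f"{example:.2f}")  # prints 3.15
```

Under this weighting, a sample that many respondents rank first but many others rank last, as with A-x, can end up with a middling score despite its first-place votes.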

The final calculations established that respondents most preferred the C Samples.[119] The final scores for A-x and B-x were virtually tied because, although many respondents preferred A-x to B-x, A-x’s aggregate score was reduced by the many attorneys who gave it a low ranking.[120] Yet the more traditional A-y outranked the more informal B-y despite the seemingly simple legal question answered in the y-issue samples and despite the number of attorneys who gave A-y a fourth-place ranking.[121] Thus, even with simple issues, it appears many attorneys prefer detailed answers to quick responses.

Comments by attorneys explaining their rankings elucidate these results. When answering what they liked and did not like about the C Samples (the iceberg e-memos), respondents generally appreciated that the C Samples balanced conciseness with ample reasoning:[122]

- “This memo had the best treatment of the case law by far for an email memo. There was no superfluous language.”
- “C was the most streamlined and was the easiest to get a sense of quickly and skim.”
- “Sample C was very well written and seemed to have all of the information necessary. I liked that it gave the basic information necessary but didn’t include too many analogous case facts, as it seemed from the prompt that the answer could be explained succinctly without a ton of extra information.”
- “I liked the structure of C and that it struck a good balance between giving me detail and not giving me too much detail. I felt like I could read only this email and not have to read the cases to advise the client. I also liked that it did not use headings for the issue and answer, and the answer was bolded at the start of the email.”
- “This was my preferred memo because it has the right balance of clearly answering the question asked and summarizing the research in a concise, organized fashion.”

In writing about the A Samples, many respondents praised the A Samples’ thoroughness:

- “I appreciated the substantially self-contained analysis and support provided in Sample A, which was in my view clearly the best. I also appreciated the visually reinforced structure in that memo.”
- “A is concise but provides sufficient detail to get a handle on the law.”
- “Sample A was complete in its analysis and conclusion . . . .”
- “A was most complete and lawyer-like.”
- “Memo A was substantially better because it provided a brief, yet thorough, analysis of the relevant law and cases associated with the same. Furthermore, memo A included headings that focused my attention on what I needed to know.”

Other attorneys, however, disliked the A Samples’ formality and length:

- “Memo A seemed to be clearly the worst, because of how long and formal it was. I shuddered at the thought of reading it on my phone on a break at a deposition.”
- “Memo A was clearly the worst because it was too long and wordy—overkill.”
- “[T]oo much information for the project. It takes more time for me to read and digest, so I have to bill the client more time than necessary. It took the associate more time than I can likely justify billing the client.”
- “[F]ar too long-winded for an email memo. The analysis, application, conclusion/recommendation was overkill. I’d be concerned whether the partner would read the entire email. This sample is better suited for a traditional memo . . . .”
- “A reads like a law school or traditional memo. Email is not the best form for this style.”

Many respondents enjoyed the B Samples’ simplicity and succinct conclusions, but they frequently criticized the B Samples’ lack of clear explanations. These samples, in other words, are a model of a concise memo gone wrong—one where the writer discloses too little analysis and leaves the reader floundering.[123] As one respondent wrote, “Memo C was an improved version of Memo B. It was exactly what Memo B was missing.” Further comments echoed this sentiment:

- “Concise. But not enough case support.”
- “Sample B was maybe just a little bit too brief in its discussion of precedent.”
- “I don’t like the string citations of case law without more of an explanation.”
- “Succinct but missing key information.”
- “[L]acks a thorough analysis . . . and lacks citations to credible sources.”
- “There also doesn’t seem to be enough analysis and case support in this email. It’s too short. I would question this associate’s conclusion because there’s not enough support provided.”

In reviewing the analyses in the D Samples, respondents lamented that the explanations required readers to do more work to understand the writers’ answers:[124]

- “D is too cursory[] and does not give comfort that the associate has thoroughly researched the issue.”
- “D correctly answered the research assignment but left it [to] the requesting attorney to read the attached C.F.R. and cases for a complete understanding of the legal reasoning.”
- “D did not provide context for the answer or organization to help the partner understand the answer after a quick read.”
- “Borders on appearing as though the associate didn’t spend enough time or take the project seriously.”

Respondents further wrote that they disliked the D Samples’ lack of more formal, in-line citations and distrusted the authors’ propositions:[125]

- “The list of citations is useless.”
- “[I]ncluding legal citations at the bottom is unhelpful without more context. In practice, legal citations are mostly after sentences, and there is not an emphasis on footnote citations. Therefore, the email is unlike what lawyers see on a day-to-day basis.”
- “[I]t doesn’t really give me any indication of the proposition(s) for which each authority stands.”

Finally, respondents loathed that the D Samples did not provide upfront conclusions.[126] Conclusions, whether “good” or “bad,” must be given up front; readers can handle bad news, but they do not tolerate waiting:[127]

- “Waiting until the end to give the conclusion was a killer for this sample. It really needed to say it in the first two sentences.”
- “Disliked: - No answer right off the bat.”
- “D didn’t have the conclusion up front which is the FIRST thing I teach new associates. Busy partners need to be hit with the bottom line first. So do clients.”

The narrative responses provide color to the rankings, but correlating the data by demographic group exposed a wide gap in preferences between groups. Specifically, further analysis explains the respondents’ divergent views over the merits of the A and C Samples. The next three sub-sections of the Article therefore review the striking schism between attorneys based on their age, the number of e-memos they write, and the size of their law firm.

3.1 Rankings According to Age Group

In ranking either the x-issue or y-issue samples, those over 40 preferred the more traditional A Samples; respondents 40 and below favored the concise but substantive iceberg e-memos—the C Samples.[128] As Tables 3 and 4 illustrate, more than half of respondents over 40 gave A-x and A-y the highest rank.[129]

By contrast, respondents 40 and under significantly preferred the C Samples.[130] Additionally, it was these “younger” attorneys who were divided over the A Samples.[131] In fact, approximately 30% of respondents under 40 gave A-x the lowest possible rank.[132]

This is not to say that those 40 and under are a monolith. Though respondents 40 and under gave the C Samples the highest scores, respondents 30 and under preferred the traditional A Samples more often than respondents age 31-40.[133] Those age 31-40 even favored the succinct B Samples over the traditional A Samples.[134]

The distaste for the traditional A Samples thus came mostly from those age 31-40, with the youngest attorneys exhibiting greater acceptance and tolerance for formalism. One explanation for these results is that the youngest practitioners are less confident and more likely to emulate the writing styles of senior practitioners. Similarly, they may be less prone to rebel against and reject the traditional format inculcated in them in law school.[135]

Notably, many of these results are statistically significant,[136] making them more than a fluke arising from a random sampling of attorneys. To test for statistical significance, the data for the x-issue samples were run through two hypothesis tests:[137] the Chi-Square Test for independence and Fisher’s Exact Test for independence.[138]

These tests are useful in determining whether the data indicate a trend, such as whether different age groups are generally inclined to prefer certain samples. Below is a breakdown of the statistically significant age data for the x-issue samples:[139]

- Attorneys over 40 tend to give Sample A-x the best rank more often than those 40 and under.[140]
- Following this trend, attorneys over 50 tend to give Sample A-x the best rank more often than those 50 and under.[141]
- Those 40 and under tend to rank Sample A-x the worst and Sample C-x the best more often than those over 40.[142]
- Those 30 and under tend to give Sample A-x the second-best rank more often than attorneys 31-40.[143]
- Those 31-40 tend to rank Sample A-x as the worst sample more often than attorneys 30 and under and more often than attorneys over 40.[144]
- Those 31-40 tend to rank Sample A-x the worst and Sample C-x the best more often than those 30 and under and those over 40. Furthermore, those 30 and under and those over 40 tend to rank Sample A-x two levels higher than Sample C-x more often than those 31-40.[145]
- Those over 40 tend to give Sample D-x the worst ranking more often than those 31-40 and those 30 and under. Those 31-40 tend to assign D-x a better rank than the other groups.[146]
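For readers unfamiliar with Fisher’s Exact Test, the following is a minimal sketch of a one-sided version for a 2 x 2 table, for example, one asking whether attorneys over 40 give Sample A-x the best rank more often than those 40 and under. The table layout and the function name `fisher_one_sided` are illustrative assumptions; this is not the study’s actual computation, and no study counts are used.

```python
from math import comb


def fisher_one_sided(a, b, c, d):
    """One-sided Fisher's Exact Test for the 2 x 2 table (illustrative sketch)

                     ranked best   not ranked best
        group 1           a              b
        group 2           c              d

    Returns the probability, with row and column totals held fixed, that
    group 1 would have a or more "ranked best" responses if group
    membership and ranking were independent (a hypergeometric tail).
    """
    row1 = a + b          # size of group 1
    col1 = a + c          # total "ranked best" responses
    n = a + b + c + d     # all respondents
    denom = comb(n, row1)
    tail = 0
    for k in range(a, min(row1, col1) + 1):
        # Count tables with k "best" ranks in group 1 under the fixed margins
        tail += comb(col1, k) * comb(n - col1, row1 - k)
    return tail / denom


# Fisher's classic tea-tasting table [[3, 1], [1, 3]]: p = 17/70
print(round(fisher_one_sided(3, 1, 1, 3), 3))  # prints 0.243
```

SciPy’s `scipy.stats.fisher_exact` with `alternative="greater"` computes the same one-sided probability for a 2 x 2 table; the hand-rolled version above simply makes the counting logic visible.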

3.2 Rankings According to Number of E-Memos Written Per Month

Similar to the results based on the respondents’ ages, respondents ranked the samples differently based on the number of e-memos they write per month. When comparing those who write fewer than four e-memos per month with those who write four or more e-memos per month, there was again a break in how respondents viewed the A and C Samples.[147] While the C Samples received the highest overall score regardless of the number of e-memos respondents write per month, respondents who write fewer than four e-memos per month have a much more favorable view of the A Samples than their more prolific counterparts.

Showing their predilection for the traditional format, 64% of respondents who write fewer than four e-memos per month gave Sample A-x a first- or second-place ranking.[148] By contrast, less than 40% of respondents who write four or more e-memos per month gave A-x such a high score.[149] Regarding the y-issue samples, over 83% of attorneys who write fewer than four e-memos per month gave Sample A-y a first- or second-place ranking, with 0% giving A-y a fourth-place ranking.[150] Attorneys who write four or more e-memos per month had a more favorable view of A-y than they did of A-x, but over 20% of y-issue respondents still gave A-y a fourth-place ranking.[151] Therefore, respondents who write more e-memos have a mixed, if not negative, view of the A Samples—even more so than attorneys 40 and under.[152]

The x-issue data again show statistically significant results, further indicating that those who spend their time writing e-memos prefer the conciseness of the C Samples to the formality of the A Samples. Below is a breakdown of the statistically significant e-memo-per-month data for the x-issue samples:

- Attorneys who write fewer than four e-memos per month are more likely to give Sample A-x a higher rank than attorneys who write four or more e-memos per month.[153]
- Attorneys who write four or more e-memos per month are more likely than attorneys who write fewer than four e-memos per month to rank C-x higher than A-x.[154]

As might be expected, the data further show that those who write four or more e-memos per month are disproportionately likely to be 40 and under.[155] This raised the question of whether the statistically significant results above stemmed not from the number of e-memos respondents wrote per month, but from the general preference of respondents 40 and under for the C Samples. Put another way, it was necessary to analyze whether the findings about preferences and number of e-memos written per month hold regardless of whether respondents are 40 and under or over 40. A secondary analysis was thus completed and confirmed that the above statistical findings hold regardless of respondents’ age.[156]

While the results in this section are useful in understanding preferences based on how many e-memos attorneys write, future studies should be done to identify the preferences of those who read and supervise e-memos. This will help determine if there is a gap between the preferences of writers and their audience.

3.3 Rankings According to Private Firm Size

There is not a significant difference in sample preferences between those who work in private practice versus those in public practice, with both groups reflecting the overall rankings expressed above.[157] There is a difference, however, among respondents at private law firms of different sizes.[158] Yet again, the respondents’ preferences split between the A Samples and the C Samples.

Respondents who work at firms of over 50 attorneys favored the C Samples, demonstrating a “big law” preference for substantive brevity.[159] Respondents at “smaller firms” took a more conservative view, giving the traditional A Samples the highest ranks.[160]

The data for the x-issue samples initially did not yield statistically significant evidence that rank distributions for Sample A-x or Sample C-x differ between practitioners at firms with 50 or fewer attorneys and practitioners at firms with over 50 attorneys. This is likely because the sample size was too small.

Therefore, to further analyze the data for statistical significance, it was necessary to compare specifically whether respondents at firms of different sizes ranked Sample A-x higher than C-x or, instead, Sample C-x higher than A-x. This more tailored comparison yields the following statistically significant result:

Practitioners at a private firm with over 50 attorneys are more likely to rank Sample C-x better than Sample A-x than practitioners at a private firm with 50 or fewer attorneys.[161]

4. Respondent E-Memo Preferences and Habits

After ranking the samples, respondents answered questions about their preferences for writing substantive e-memos, with a focus on discrepancies identified in the literature review. This included asking respondents which sample e-memos provided the “best” analytical depth and how much reasoning a writer should include in an upfront conclusion. The study then asked respondents about workplace habits, such as the typical turnaround time for an e-memo and the media supervisors use to read memoranda.

4.1 Analytical Depth Matters

When asked to rank the samples “simply [for] the depth of legal analysis and nothing else,” respondents greatly favored the A Samples: 83.33% of respondents gave A-x the top rank, while 76.19% gave C-x the second-highest rank. Again, the D Samples were plainly last.[162]

Remarkably, though respondents overwhelmingly acknowledged the A Samples had more apparent analytical “depth,” that alone did not directly translate into the samples’ overall ranks, as seen in the results above. The C Samples, which balanced depth with concision, remained the overall favorite because of the number of respondents who gave the A Samples a third- or fourth-place ranking. Therefore, while depth is valued and recognized, respondents—especially those 40 and under, those who write four or more e-memos per month, and those working at private firms of over 50 attorneys—value it more when the analysis is also concise.[163]

4.2 Upfront Conclusions Need Reasoning

The study next asked respondents to review sample conclusions. As seen in Appendix 11, respondents who reviewed the x-issue samples could choose between two sample conclusions with differing levels of reasoning or the option of no upfront answer at all. Those responding to the y-issue samples reviewed two samples with differing levels of reasoning and a third sample that provided no reasoning at all.[164]

Conflicting with most textbook samples, the results show that respondents not only preferred conclusions with reasoning but also preferred that the reasoning be detailed.[165] Not one respondent reviewing the x-issue samples stated an initial answer was unnecessary, and 90.91% of the attorneys responding to the y-issue samples gave the lowest rank to the most conclusory answer, which offered no reasoning.[166]

One scholar predicted such results, stating the upfront conclusion should be an “answer with reasons.”[167] This can be a “single, short paragraph,” or “you can write the answer and give the reasons in bullet points.”[168] This advice follows what we so often teach: Legal reasoning is the meeting of law and facts. Only when the two are combined does the reader have a true answer.

Legal analysis requires constantly answering “why” and responding with an instructive “because.” This point is particularly true for the upfront answer in an e-memo, which is read by an impatient reader who needs the major and minor premises of the writer’s conclusion articulated from the start.[169] If a writer, especially a novice writer, is to be believed, her credibility must be earned immediately with clear reasoning.[170] After all, because the upfront conclusion in an e-memo is an adaptation of the traditional memorandum’s brief answer, such initial reasoning is customary.[171]

4.3 Applications Are Necessary

Part 1 explained that there is currently little scholarship on how to apply facts to law in e-memos.[172] To partially address this gap, the study asked respondents how important the applications in the x-issue and y-issue samples were.[173] Respondents could choose “important,” “somewhat important,” “not important,” or “other.”[174]

As might be expected, a significant majority of respondents who reviewed the more complex x-issue samples (75%) stated the application was “important.”[175] Not one respondent stated the application was “not important.”[176] With the simpler y-issue samples, the application might seem a bit perfunctory; the writer’s sole contribution was showing that the client’s facts met a statutory definition. Even so, as seen in Table 24, over 83% of y-issue respondents deemed the application “important.” Again, not one respondent stated the application was “not important.”[177]

When assigning students e-memo problems, therefore, no matter the research question’s complexity, professors must ensure students are including an application of facts to the law.[178] Even in e-memos answering basic, procedural research questions, if the question references a specific (or even hypothetical) client, applications matter.[179]

4.4 Attorneys Use Explanatory Parentheticals

Supporting the advice from the literature review and respondents’ general preference for the C Samples, attorneys rely on explanatory parentheticals when providing caselaw within e-memos. As shown in Table 25, over 42% of the 96 respondents answering this question stated explanatory parentheticals are used “very frequently” or “frequently” in e-memos.

Legal writing professors must teach the art of writing explanatory parentheticals.[180] Having students draft and redraft parentheticals can be done side-by-side with asking students to draft case illustrations, as parentheticals are a condensed form of case illustrations.[181]

4.5 Preferences Towards Formal Citations Are Complicated

With traditional memoranda, it is general knowledge that authority must back each sentence needing support. There is no such consensus about when and how a writer should cite in an e-memo.[182] Not only are there discrepancies in scholarly advice, but respondents also lacked unity about the importance of formal citations in e-memos.

When asked “[h]ow important” “formal Bluebook citations” are in e-memos, just 4% of respondents selected “very important.”[183] Seventeen percent chose “important,” 35% responded “somewhat important,” and 35% answered “not important.”[184]

The relative lack of concern about, or agreement on, proper citations might stem from the wording of the question and the term “formal Bluebook citations.” It also might stem from the question existing in a vacuum rather than being tied to a sample document. Attorneys often view proper legal citation as a “necessary evil”—a tedious exercise in memorizing gnostic knowledge.[185] In practice, this view is unhelpful to writers, readers, and law students; legal citation is a core convention of the law.[186]

When respondents earlier commented about the e-memo samples, many criticized the D Samples, and to a lesser extent, the B Samples, for missing citations or crucial citation information.[187] In contrast, not one survey respondent disparaged the A or C Samples for citing per The Bluebook’s or the ALWD Guide to Legal Citation’s rules and including pinpoints to specific pages. True, there is a clear delineation between perfect citations and unusable ones, but telling students that citations are “somewhat important,” that e-memos do not need to use “formal, full-form citations,”[188] or that e-memo writers can cite “less frequently”[189] creates an amorphous and unworkable standard. It is better for new writers to assume a high degree of formality in their citations.[190]

Even in “informal” e-memos, proper in-line citations[191] are indispensable guideposts, rapidly providing thoughtful readers with information about the value of the writer’s propositions.[192]

Compared with Bryan Garner’s preferred footnoted citations,[193] in-line citations are particularly advantageous in e-memos and other documents read on a screen.[194] Readers using a computer, tablet, or phone should not be forced to scroll down to the bottom of a document to check the efficacy of a proposition and then back up again to continue reading.[195] In-line citations are not, as Garner claims, “speed bumps” interrupting a reader’s thought process.[196] Rather, properly formatted in-line citations within e-memos give skeptical and busy readers the proof they need and the conciseness they desire.[197]

Respondents’ use of citation signals further highlights the importance of fashioning proper citations in e-memos.[198] Over 60% of respondents indicated they or those they supervise use citation signals in e-memos “moderately,” “frequently,” or “very frequently.”[199]

Citation signals might be even more important in e-memos than in traditional memoranda. Because e-memos must be exceedingly concise, squeezing more information into each sentence, and each citation, is critical. Signals are therefore especially beneficial in e-memos; they quickly give meaning and context to a citation, telling a reader precisely how the source supports or contradicts the writer’s proposition.[200]

These data reinforce the importance of explanatory parentheticals, which are often needed in conjunction with signals to explain a source’s relevance.[201] Professors should therefore consider teaching rudimentary signals (e.g., see, see also) and explanatory parentheticals together.

4.6 Attachments and Hyperlinks Are Useful

As seen in Table 28, the majority of respondents (53%) reported “it is common practice to electronically attach” cases and statutes to e-memos. An additional 11% agreed that although attaching applicable law is not yet common practice at their workplace, it “should” become one.[202]

In addition to attaching applicable law, one suggestion professors might make to students is to highlight the portions of an opinion they directly quote or paraphrase in their e-memo. The highlights will save a skeptical reader time and better direct the reader than a pinpoint citation alone.

When it comes to hyperlinking citations, about half of the 76 respondents answering this question agreed that hyperlinking is or “should be” common practice.[203] This information supports the argument that hyperlinking is becoming a general trend in legal writing.[204] Some courts explicitly state they prefer hyperlinking in briefs and other court filings because hyperlinking allows readers to immediately verify an attorney’s argument.[205]

4.7 Readers Rely on Computer Screens

One of the last survey questions asked how supervising attorneys read office memoranda of any kind—not just e-memos. Respondents could choose as many options as applied.

Of the 88 attorneys who responded, 88.64% stated supervisors read memos on their computers.[206] Almost 60% of respondents stated supervisors still read memoranda in print.[207] Phones were the third most-used medium, with nearly 40% of supervisors sometimes reading memoranda on the small screen.[208]

Respondents next ranked the methods supervising attorneys use “the most for reading legal memoranda” and were asked to skip the question if they did not know the answer. Seventy-five attorneys responded. Sixty percent of respondents stated computer screens are the most used method for reading memoranda.[209] Print came in second, with 32%.[210] Only 8% of respondents said tablets or phones were the primary methods used by supervisors.[211]

The results are much closer when reviewing the answers of the 75 respondents who indicated supervisors’ secondary means for reading memoranda. While computer screens again took the top spot (32%), print (28%) and phones (26.67%) were close behind.[212]

4.8 E-Memos Are Quick-Turnaround Projects

Although e-memos are rigorous writing projects, the general expectation is that an associate will research, draft, edit, and complete an e-memo within one to two days. About half of respondents (49.48%) stated the typical turnaround time for an e-memo is within 24 hours. Nearly all respondents (91.75%) said the typical turnaround time is within 48 hours.[213]

4.9 Traditional Memoranda Are Not Dead in Private Practice

Much of the scholarly debate over e-memos has rightly focused on whether the e-memo explosion has eviscerated the traditional memorandum.[214] According to Tiscione’s study, around 40% of her respondents wrote no traditional memoranda in a year.[215] An overwhelming majority—75%—wrote no more than three traditional memoranda per year, and around 87% wrote no more than six.[216] Only a dismal 4% wrote more than 20.[217] Tiscione, therefore, posits that traditional memoranda are “all but dead.”[218] My survey instead suggests that the demands of modern practice have merely hobbled traditional memoranda.[219]

As seen in Table 33, of the 100 respondents answering this series of questions in my survey, just 17% stated they do not write traditional memoranda. And only a slight majority (54%) stated they write no more than five traditional memoranda per year.[220] Further, 10% write 31 or more traditional memoranda per year.[221]

In addition to writing more traditional memoranda, respondents to my survey also wrote far more non-traditional memoranda—including e-memos—than respondents to Tiscione’s survey. Thirty-five percent of my respondents wrote or assigned more than 20 non-traditional memoranda per year.[222] In Tiscione’s survey, by comparison, approximately 25% wrote more than 20 informal memoranda, and just around 19% assigned more than 20.[223] There is an even starker distinction when looking at those who wrote or assigned the fewest non-traditional memoranda. Only 19% of my respondents wrote or assigned five or fewer informal memoranda per year.[224] Approximately 34% of Tiscione’s respondents, however, wrote six or fewer non-traditional memoranda per year, and approximately 47% assigned six or fewer per year.[225]

Several factors might explain the differing results between Tiscione’s survey and my own, including disparities in respondent demographics and the more than a decade that separated the two studies. Still, respondents in both studies share much in common: 69% of Tiscione’s respondents were in private practice,[226] as are 64% of my respondents.[227] Similarly, the largest share of Tiscione’s respondents worked at firms with over 200 attorneys,[228] and the largest share of my respondents in private practice worked at firms of over 150 attorneys.[229]

And, even when singling out my respondents working in firms of over 150 attorneys, over 40% of respondents stated they wrote or supervised more than five traditional memoranda per year.[230] Further, although traditional memoranda can be expensive, in firms of 50 or fewer attorneys, again, over 40% of my respondents wrote or assigned more than five traditional memoranda per year.[231]

5. Curricular Implications

Adding any single e-memo assignment to first-year legal writing courses is not enough. E-memos come in a variety of vintages,[232] including summaries of traditional memoranda[233] and “procedural” e-memos.[234] Ideally, students will complete these types of e-memo assignments during their studies.[235] Still, limited course hours dictate choosing first-year assignments that mirror practice and provide students a chance to build their analytical muscles.[236] For this reason, professors teaching first-year legal writing courses should consider standalone, substantive e-memo assignments similar to the research questions used in this study. And they should contemplate reorganizing their course curriculum to include more short assignments.[237]

A full discussion of how courses might be formatted will appear in a future article, but one reasonable reform is assigning students an e-memo as their first writing assignment.[238] Before students encounter the unfamiliar components of a traditional memorandum, the assignment introduces them to a recognizable means for developing legal analysis.[239] With little instruction regarding format and just one judicial opinion to provide applicable law, students draft a short email analyzing a client’s situation. Immediately, students in the legal writing classroom are reading, writing, and creating like a lawyer. Just as notably, they are introduced to legal writing as the process of forming legal analysis, not mimicking a rigid format.[240]

5.1 Choosing E-Memo Samples

When setting goals and objectives for substantive e-memo assignments, we come back to the ultimate question the study set out to answer: How should an e-memo be formatted and written? The overall preference for the A and C Samples means examples similar to these should serve as training models. Indeed, students should review and critique samples of varying length, depth, and formatting. But students should particularly scrutinize e-memos analogous to the A and C Samples and learn about the disharmony trilling between tradition and innovation.[241]

If we must signal to students a “best” e-memo to emulate, a reasonable solution is to place significant emphasis on the C Samples. Many respondents appreciated the A Samples’ thoroughness and more conventional format,[242] but—critically—those who regularly write e-memos chose the C Samples.[243] The C Samples further provide students the best example for incorporating explanatory parentheticals, essential instruments for modern practice. Moreover, while the A Samples were some practitioners’ favorite e-memos, many respondents despised their length and formality.[244] There was no such animosity towards the C Samples.[245] Consequently, the C Samples are the safest benchmark for an aspiring attorney.

There is, of course, the age divide between how attorneys ranked the A and C Samples, but this split makes sense. Senior practitioners are more attached to legal writing that matches their law school experience; younger attorneys are more accustomed to writing shorter messages with digital devices.[246] But even if senior attorneys prefer the more traditional e-memo, “digital natives” are quickly becoming the supervisors.[247]

For now, our students must be “bilingual,” altering e-memo style depending on their reader’s preferences.[248] Yet our courses cannot ignore the trend towards conciseness for two reasons. First, because writing memoranda already makes students fluent in tradition, it is imperative our e-memo assignments teach the nuances of succinct yet incisive e-memos.[249] Second, e-memos are sent to a targeted audience as part of an ongoing conversation.[250] Presumably, the intended reader already knows the issues, the basics of the law, and the steps of legal logic. The inherent nature of email thus favors moving towards e-memos closer to the C Samples.

5.2 Teaching Students with the Help of Practicing Attorneys

As students progress through their first semester, professors can formally introduce substantive e-memo assignments. The first formal e-memo exercise can come after the initial traditional memorandum assignment and might either present the students with a new client or have a previous client ask a follow-up question related to the earlier problem. For example, if the client for the traditional memorandum needed to assess their liability for false imprisonment, a follow-up e-memo assignment could ask students to assess whether the client’s conduct rose to the level of “extreme and outrageous conduct”—without asking students to consider the other elements of intentional infliction of emotional distress. Thus, while students would be operating in a familiar universe of facts, the e-memo assignment would ask students to conduct independent legal research and write a standalone legal analysis.

An assignment I have found useful in teaching substantive legal analysis relies on the help of friends and colleagues in practice. After completing two class periods of instruction and exercises, students receive an email from their “supervisor” asking them to complete a substantive e-memo within 48 hours. But, importantly, to give students a taste of legal practice, the assignment does not come from me: It comes from a practicing attorney.

Before students ever receive their assignment, I provide research questions, sample answers, and checklists to a group of reliable and ethical lawyers. These attorneys then email the students with my research question and a request for an e-memo response within 48 hours.[251] To help students and ease their nerves, I tell students they can first email me their e-memo to receive general critiques before sending their assignment to their “supervisor.”

Writing for practicing attorneys motivates each student to excel and gives the student a contact practicing in an area of the student’s interest. If a student says they want to explore working at a large firm in environmental law, I can assign the student a supervising attorney who does just that. If the student is interested in working for the public defender’s office, I can email a former colleague. Some students have even gone on to complete internships or begin careers with their attorney-contact’s practice.

The exercise has always been a success; students treat the e-memo with urgency, and practitioners praise the assignment’s realism and the students’ work products.[252] Crucially, the assignment has given students an enhanced sense of audience and a chance to write a document that is part of a direct conversation with an informed reader. It has given students a chance to create an “iceberg e-memo.”

5.3 The Iceberg E-Memo

As Tiscione noted, email is a “cool” medium—one that requires the audience’s “participation or completion.”[253] Email is often part of a dialogue among disciplinary experts.[254] It asks a reader to “fill in gaps” that would clearly (and perhaps repeatedly)[255] be expressed in a traditional memorandum.[256] In this way, the e-memo’s rhetoric should mirror Ernest Hemingway’s “Iceberg Theory” of writing.[257]

According to Hemingway, a writer who truly “knows enough of what [they are] writing about” may omit explicit themes, yet a knowledgeable reader will still understand the writing’s meaning.[258] Like most of an iceberg’s mass, some information might be submerged beneath the surface.[259] But the writer’s points will resonate truly with the reader because the writer will carefully choose what information to include and, just as importantly, to exclude.[260] If the writer, however, does not understand the subject matter or have confidence in her work, her omissions will strip the writing of clear meaning.[261] She will render her work ineffective.[262]

The Iceberg Theory is acutely applicable to those writing e-memos; Hemingway fostered his discipline “to prune language and avoid waste motion” by writing short stories.[263] With the “Iceberg Theory of e-memo writing,” the writer may omit known facts, clearly understood logical steps, and overly duplicative conclusions while still providing sharp substantive analysis. Elegance replaces redundancy.

Thus, a proper e-memo is not less rigorous than a memorandum;[264] it is perhaps even more so. Writers must consider not only what to include but also what to exclude.[265] Writing such expertly crafted documents requires that the writer have empathy for her reader and the self-restraint not to announce all she knows.[266]

Likewise, the analysis in iceberg e-memos (such as the C Samples) is not shallower than the “deep” analysis of a traditional memorandum.[267] There should be no meaningful difference between the analytical depth of a memorandum and a well-crafted e-memo.[268] The only distinction is the reader’s expectation of how much information is appropriately submerged.[269]

This kind of thoughtful omission can occur only when the writer has internalized the law, just as Hemingway’s aesthetic was grounded in writing based on personal experiences.[270] If an e-memo drafter “writes what she knows,” she can write in assertive, straightforward prose. For example, a writer can omit superfluous facts in a case explanation. She can omit information that muddles her analysis and fails to help a reader understand the conclusion. In other words, a writer determined to model an iceberg e-memo will understand the difference between (1) a case explanation written like a book report exclaiming her own knowledge and (2) an extraction of key information crafted to benefit the reader.

With this necessary focus on key information, iceberg e-memos force a writer to concentrate on the fundamentals of good legal writing.[271] Thesis sentences, headings, and deliberate reasoning are as crucial as ever.[272] By comparison, Hemingway knew how to write selective statements, reiterating “key phrases for thematic emphasis.”[273] He repeated key words, finding “a synonym strains the writer’s eyes and the reader’s ears.”[274] Similarly, an iceberg e-memo’s writer makes each sentence punch with meaning, repeating key legal terms of art without fear that she should consult a thesaurus.[275] The writer carries key words throughout the paper, using them in her thesis sentences for emphasis and structure. She invokes them like an incantation, quickly letting her reader know when the legal definition is met—and why.

Hence, iceberg e-memos are like a martial artist’s devastating jab: quick, precise, and powerfully understood upon impact.[276] The conciseness of proper e-memos teaches the writer to get to the point without hesitation, without clumsy, meandering movement that diminishes what could otherwise be a concussive blow.[277] E-memos teach accuracy.

6. Conclusion

There is no silver bullet for writing substantive e-memos. No sample is a panacea. But the results of this study confirm the foundational points of past scholarship and provide additional, practical guidance for teaching and drafting e-memos. E-memos, with their necessarily concise format, create the ability to share complex ideas in straightforward terms. Moreover, e-memos provide the academy a chance to teach students to effectively provide clients with cheaper and more accessible documents, thus delivering greater access to justice.

E-memos, of course, have their shortcomings. They tempt the writer to skip logical steps and provide weak analyses without support. If professors choose to teach e-memos, they should consider sharing multiple e-memo samples and emphasizing the pitfalls of writing e-memos without sufficient reasoning. Professors might also share with students that no single e-memo assignment encompasses the medium—no one assignment is the end-all-be-all. But substantive e-memos deserve our attention and perhaps a place—if not multiple places—in our curriculum. The data and samples here thus might serve as a new checkpoint in our ongoing discussion of how to best prepare students for practice as our pedagogy continues to evolve.

Appendices

Appendices 1-12 can be downloaded here.

See Matthew Kirschenbaum, How Technology Has Changed the Way Authors Write, The New Republic (July 26, 2016), https://newrepublic.com/article/135515/technology-changed-way-authors-write; Christina Haas, Writing Technology, Studies on the Materiality of Literacy ix (2013).

See Harold A. Innis, Empire and Communications 35-36, 138-39 (2007) (examining how the movement from clay tablets to papyrus and then from papyrus to parchment changed writing); Ellie Margolis, Is the Medium the Message?, 12 Legal Comm. & Rhetoric: J. ALWD 1, 7-8 (2015) (discussing how legal writing changed with the advent of word processors).

See Thomas Hills, The Evolution of the Written Word, Psychology Today (Dec. 28, 2016), https://www.psychologytoday.com/us/blog/statistical-life/201612/the-evolution-the-written-word (describing how language has become easier to learn and communicate over time, including American English over the last 200 years).

Kristen K. Robbins-Tiscione, From Snail Mail to Email: The Traditional Legal Memorandum in the Twenty-First Century, 58 J. Legal Educ. 32, 48-49 (2008) [hereinafter Robbins-Tiscione, Snail Mail]; Katrina June Lee, Process Over Product: A Pedagogical Focus on Email as a Means of Refining Legal Analysis, 44 Cap. U. L. Rev. 655, 664 (2016); Margolis, supra note 2, at 9; Ann Sinsheimer & David J. Herring, Lawyers at Work: A Study of the Reading, Writing, and Communication Practices of Legal Professionals, 21 Legal Writing: J. Legal Writing Inst. 63, 79 (2016) (presenting the results of a three-year ethnographic study of attorneys in the workplace and concluding that junior associates spend an enormous amount of time reading and drafting emails).

Margolis, supra note 2, at 4 (noting the “entrenchment” of the traditional memorandum in the twentieth century); see also Kirsten K. Davis, “The Reports of My Death Are Greatly Exaggerated”: Reading and Writing Objective Legal Memoranda in a Mobile Computing Age, 92 Or. L. Rev. 471, 498-99 (2014) (providing a history of traditional memoranda).

Nancy L. Schultz & Louis J. Sirico, Jr., Legal Writing & Other Lawyering Skills 223 (6th ed. 2014) (“Increasingly, short emails are replacing the traditional ten-to fifteen-page memo. In your professional career, you will compose far more emails than memos.”); see Kristen K. Tiscione, The Rhetoric of E-mail in Law Practice, 92 Or. L. Rev. 525, 538 (2013) (“Email is the concentrate, the reduction, the essence, but by no means a summary of, a traditional memorandum.”) [hereinafter Tiscione, Rhetoric].

This Article accepts Kirsten Davis’ definition of a traditional memorandum as a document that “contains most or all of the following parts: question presented, brief answer, statement of facts, discussion, and conclusion.” See Davis, supra note 5, at 482.

Helene S. Shapo, Marilyn R. Walter & Elizabeth Fajans, Writing and Analysis in the Law 167 (7th ed. 2018) (“[Emails] are less expensive in terms of billing and they accommodate the recipient’s need for a fast response.”); Robbins-Tiscione, Snail Mail, supra note 4, at 36; Joe Fore, The Comparative Benefits of Standalone E-Mail Assignments in the First-Year Legal Writing Curriculum, 22 Legal Writing: J. Legal Writing Inst. 151, 152 (2018).

See Margolis, supra note 2, at 9 (defining an e-memo as legal analysis sent “directly in the body of [an] email,” rather than as an attachment).

Compare Davis, supra note 5, at 487 (arguing e-memos run the risk of giving readers “poorly thought-through legal analysis”), with Tiscione, Rhetoric, supra note 6, at 539 (rebutting Davis by stating that “decisions that go into email are no less deliberative than those in memoranda”).

Sherri Lee Keene defines “conciseness” as such:

When legal professionals refer to conciseness, they are not only speaking of the length of the document, but also of its ability to keep the reader focused on pertinent information and to explain why this information is important. While brevity is a worthwhile goal . . ., conciseness requires not only that the writer use an efficient writing style, but also that the writing be narrowly focused.

One Small Step for Legal Writing, One Giant Leap for Legal Education: Making the Case for More Writing Opportunities in the “Practice-Ready” Law School Curriculum, 65 Mercer L. Rev. 467, 478 (2014) (footnote omitted).

See Margolis, supra note 2, at 7-8 (discussing how writing on a screen allows a writer to instantly delete and move text, thus making “less at stake” and creating the possibility that writers “will not think through the analysis as thoroughly” as when “making corrections was more cumbersome”).

See Richard C. Wydick, Plain English for Lawyers 7-22 (5th ed. 2005); Bryan A. Garner, Legal Writing in Plain English: A Text with Exercises 24-25 (2d ed. 2013) [hereinafter Garner, Plain English].

A.B.A. Sec. of Legal Educ. & Admis. to the Bar, Leg. Educ. and Pro. Dev.—An Educ. Continuum, Report of the Task Force on Law Schools and the Profession: Narrowing the Gap (1992); William M. Sullivan, Anne Colby, Judith Welch Wegner, Lloyd Bond & Lee S. Shulman, Educating Lawyers: Preparation for the Profession of Law (2007) [hereinafter Carnegie Report]; Roy Stuckey et al., Best Practices for Legal Education: A Vision and a Roadmap (2007) [hereinafter Stuckey, Best Practices].

See Stuckey, Best Practices, supra note 14, at 13 (“[L]aw schools are simply not committed to making their best efforts to prepare all of their students to enter the practice settings that await them.”); Margaret Martin Barry, Practice Ready: Are We There Yet?, 32 B.C. J.L. & Soc. Just. 247, 250 (2012) (“Despite almost a century of critique that this approach does not provide enough preparation for the profession, law schools have been reluctant to substantially modify it.”); Jason G. Dykstra, Beyond the “Practice Ready” Buzz: Sifting Through the Disruption of the Legal Industry to Divine the Skills Needed by New Attorneys, 11 Drexel L. Rev. 149, 184-85 (2018) (criticizing law schools’ “illusory” “efficacy” for “merely affix[ing] a ‘practice ready’ moniker upon existing course offerings”).

The Carnegie Report highlights the important work done in legal writing classrooms, stating, “[T]he best legal writing classes we encountered focused on learning tasks that are typical of legal work.” Carnegie Report, supra note 14, at 105. The Report goes on to state that legal writing courses cover “critical skills of legal practice that receive little or no attention in” first-year casebook classrooms and that students in legal writing are “beginning to cross the bridge from legal theory to professional practice.” Id. But see Lisa T. McElroy, Christine N. Coughlin & Deborah S. Gordon, The Carnegie Report and Legal Writing: Does the Report Go Far Enough?, 17 Legal Writing: J. Legal Writing Inst. 279, 281 (2011) (criticizing the Carnegie Report for failing to recognize legal writing professionals’ expertise in following best educational practices).

Mary Beth Beazley details how legal writing professors and courses have spurred curricular innovations because, among other reasons, legal writing professors have the “outcome-based goal of making good writers out of all of their students” and there is a stronger connection in legal writing courses between teaching methods and student performance than in casebook courses. Better Writing, Better Thinking: Using Legal Writing Pedagogy in the “Casebook” Classroom (Without Grading Papers), 10 Legal Writing: J. Legal Writing Inst. 23, 30-31 (2004); see also Kirsten A. Dauphinais, Sea Change: The Seismic Shift in the Legal Profession and How Legal Writing Professors Will Keep Legal Education Afloat in Its Wake, 10 Seattle J. Soc. Just. 49, 104 (2011) (stating legal writing professors are leaders in pedagogical methodology); Susan Hanley Duncan, The New Accreditation Standards Are Coming to a Law School Near You—What You Need to Know About Learning Outcomes & Assessments, 16 Legal Writing: J. Legal Writing Inst. 605, 611 (2010) (hypothesizing that legal writing professors will make “natural leaders” in meeting new accreditation standards); Keene, supra note 11, at 497 (“Legal writing scholars have published a wealth of scholarly articles about how to teach writing and give feedback effectively, and there are a number of scholarly journals that focus specifically on legal writing teaching pedagogy.”).

Ass’n of Legal Writing Dirs. & Legal Writing Inst., Report of the Annual Legal Writing Survey 2015, at xi, 13, https://www.alwd.org/images/resources/2015 Survey Report (AY 2014-2015).pdf (reporting that 65% of responding legal writing programs use email assignments) [hereinafter Report]; see Fore, supra note 8, at 154-57 (summarizing the shift from “traditional” memoranda to e-memos in practice and the challenges and benefits of implementing such changes in the classroom).

See Fore, supra note 8, at 153 (stating “email assignments should be an integral part” of the legal writing curriculum and more than summaries of a “larger memo assignment”).

See Kristen K. Robbins-Tiscione, A Call to Combine Rhetorical Theory and Practice in the Legal Writing Classroom, 50 Washburn L. J. 319, 337 (2011) (“Combining rhetorical theory and practice in the legal writing classroom is integrative because it treats each aspect of law as inseparable from the other.”). Cf. Brent E. Newton, Preaching What They Don’t Practice: Why Law Faculties’ Preoccupation with Impractical Scholarship and Devaluation of Practical Competencies Obstruct Reform in the Legal Academy, 62 S.C. L. Rev. 105, 126 (2010) (“[L]aw professors increasingly have felt the need to prove themselves as legitimate academicians in the university lest they be perceived as mere teachers at a trade school.”).

See generally Robbins-Tiscione, Snail Mail, supra note 4, at 46-49 (presenting the results of a study surveying Georgetown graduates about how they convey legal analysis and how they believe predictive writing should be taught in the classroom).

Previous articles have presented studies highlighting the importance of e-memos in law practice. See Sheila F. Miller, Are We Teaching What They Will Use? Surveying Alumni to Assess Whether Skills Teaching Aligns with Alumni Practice, 32 Miss. C. L. Rev. 419, 434-35 (2014); Sinsheimer & Herring, supra note 4, at 78-79; Susan C. Wawrose, What Do Legal Employers Want to See in New Graduates? Using Focus Groups to Find Out, 39 Ohio N.U. L. Rev. 505, 538-39 (2013). This Article contributes to past scholarship by reviewing how attorneys write e-memos.

The focus of this study is limited to variations in how attorneys write substantive e-memos that answer research questions requiring application of facts to the law. It is not concerned with summaries of longer memoranda or “procedural e-memos” that provide straightforward information where there is less analysis and therefore less variation between competent writers’ finished products. See Fore, supra note 8, at 276-77 (noting procedural e-memos require little predictive analysis); see also Jennifer Will, Call It an E-Convo: When an E-Memo Isn’t Really a Memo at All, 24 Legal Writing: J. Legal Writing Inst. 269, 278 (2020) (calling procedural e-memos a “sort of perfunctory information exchange”). At the same time, this Article is in no way criticizing the practical utility or pedagogical need to teach summaries or procedural e-memos. Both, especially procedural e-memos as excellently described by Fore, are necessary for practitioners and students.

Ellie Margolis & Kristen Murray, Using Information Literacy to Prepare Practice-Ready Graduates, 39 U. Haw. L. Rev. 1, 15 (2016); Lee, supra note 4, at 655 (explaining that attorneys in the late 1990s and early 2000s “became self-taught experts” on email communication).

See, e.g., Daniel L. Barnett & Jane Kent Gionfriddo, Legal Reasoning & Objective Writing: A Comprehensive Approach (2016); Charles R. Calleros & Kimberly Holst, Legal Method and Writing I (8th ed. 2018); J. Scott Colesanti, Legal Writing, All Business (2016); Alexa Z. Chew & Katie Rose Guest Pryal, The Complete Legal Writer (2016); Christine Coughlin, Joan Malmud Rocklin & Sandy Patrick, A Lawyer Writes: A Practical Guide to Legal Analysis (3d ed. 2018); Linda H. Edwards, Legal Writing and Analysis (5th ed. 2019); Elizabeth Fajans, Mary R. Falk & Helene S. Shapo, Writing for Law Practice: Advanced Legal Writing (3d ed. 2015); Richard K. Neumann, Ellie Margolis & Kathryn M. Stanchi, Legal Reasoning and Legal Writing (8th ed. 2017); Laurel Currie Oates & Anne Enquist, Just Memos: Preparing for Practice (5th ed. 2018); Wayne Schiess, Writing for the Legal Audience (2d ed. 2014) [hereinafter Schiess, Legal Audience]; Schultz & Sirico, supra note 6; Shapo, Walter & Fajans, supra note 8; Christopher D. Soper, Cristina D. Lockwood, Bradley G. Clary & Pamela Lysaght, Successful Legal Analysis and Writing: The Fundamentals (4th ed. 2017); Kristen Konrad Tiscione, Rhetoric for Legal Writers: The Theory and Practice of Analysis and Persuasion (2d ed. 2016) [hereinafter Tiscione, Rhetoric for Legal Writers].

See, e.g., Charles Calleros, Traditional Office Memoranda and E-mail Memos, in Practice and in the First Semester, 21 Perspectives: Teaching Legal Res. & Writing 105, 105 (2013), http://info.legalsolutions.thomsonreuters.com/pdf/perspec/2013-spring/2013-spring-3.pdf [hereinafter Calleros, Traditional].

See, e.g., Wayne Schiess, How to Write an E-mail Memo, Legalwriting.net (Dec. 8, 2014), http://sites.utexas.edu/legalwriting/2014/12/08/how-to-write-an-e-mail-memo/ [hereinafter Schiess, How to Write].

Calleros, Traditional, supra note 26, at 108; Calleros & Holst, supra note 25, at 121.

Robbins-Tiscione, Snail Mail, supra note 4, at 34-35 (arguing that adhering strictly to the traditional memorandum format risks elevating form over substance); id. at 35 (“Although well-established, the traditional memorandum is not in itself a purpose for writing, and it should give way to a more purpose-driven approach to teaching written analysis.”). See generally Lee, supra note 4, at 668-69 (providing an example of an e-memo assignment that emphasizes process over product).

Ellie Margolis & Susan L. DeJarnatt, Moving Beyond Product to Process: Building a Better LRW Program, 46 Santa Clara L. Rev. 93, 99 (2005).

See Tracy Turner, Flexible IRAC: A Best Practices Guide, 20 Legal Writing: J. Legal Writing Inst. 233, 269-70 (2015) (observing that many professors dislike providing sample briefs or memoranda “out of fear that students will slavishly copy aspects of the paradigm or sample that do not make sense in a particular situation”).

See Judith B. Tracy, “I See and I Remember; I Do and Understand”: Teaching Fundamental Structure in Legal Writing Through the Use of Samples, 21 Touro L. Rev. 297, 306-07 (2005) (“The writer’s task is to convert the analytical process into a structure . . . .”).

See Katie Rose Guest Pryal, The Genre Discovery Approach: Preparing Students to Write Any Legal Document, 59 Wayne L. Rev. 351, 375 (2013) (“[G]enre is a thing that exists in a recurring situation with an audience, context, and needs, with conventions that arise in response to this recurring situation.”) (footnote omitted).

Calleros, Traditional, supra note 26, at 107 (noting a summary of the assignment at the beginning of an e-memo may replace the traditional issue statement); Coughlin, Rocklin & Patrick, supra note 25, at 322-23 (opining the introduction, which replaces the question presented and brief answer, “should include a short statement about the issue that the email addresses and your conclusion or proposed resolution”); Schiess, How to Write, supra note 27 (stating an e-memo should start with the question asked instead of skipping “right to the answer” because that could frustrate “secondary readers” or the “assigning lawyer who’s reading the e-mail days or weeks later”); Schultz & Sirico, supra note 6, at 226 (advising writers to “begin with a brief summary of the question”); Soper, Lockwood, Clary & Lysaght, supra note 25, at 113; Tiscione, Rhetoric for Legal Writers, supra note 25, at 135.

See, e.g., Calleros, Traditional, supra note 26, at 107; Calleros & Holst, supra note 25, at 121; see also Coughlin, Rocklin & Patrick, supra note 25, at 388 (stating both traditional memos and e-memos “convey the major points of the legal analysis and the general structure of that analysis will likely be the same”).

See, e.g., Calleros, Traditional, supra note 26, at 107; Coughlin, Rocklin & Patrick, supra note 25, at 324 (stating that an e-memo’s focus should be on explaining “the relevant rules” and that a writer should generally “omit case illustrations” in favor of explanatory parentheticals); Neumann, Margolis & Stanchi, supra note 25, at 173; Oates & Enquist, supra note 25, at 228 (positing that case descriptions should be “shorter, and parentheticals [] more common”); Schiess, How to Write, supra note 27 (remarking there is “usually no space” for a case illustration so “leave it out”).

See, e.g., Calleros, Traditional, supra note 26, at 107; Coughlin, Rocklin & Patrick, supra note 25, at 327; Tiscione, Rhetoric for Legal Writers, supra note 25, at 136.

See, e.g., Calleros, Traditional, supra note 26, at 110-11; Calleros & Holst, supra note 25, at 154-55. The author respectfully disagrees with the advice of Oates and Enquist, who write that e-memos that apply facts to law will likely contain a summary of the “legally significant facts,” the “background facts,” and “any emotionally significant facts.” See Oates & Enquist, supra note 25, at 226.

See, e.g., Coughlin, Rocklin & Patrick, supra note 25, at 321 (remarking the “optimal length for an email is one screen”); Schiess, How to Write, supra note 27; see also Calleros, Traditional, supra note 26, at 105 (stating a memo should be “no more than one or two single-spaced pages”); Calleros & Holst, supra note 25, at 121 (stating e-memos are preferred when an attorney can provide a “complete analysis in 1-3 pages of text in a streamlined format”); Schultz & Sirico, supra note 6, at 224 (“Most likely, the email will equal a printed page or two, at most.”); Soper, Lockwood, Clary & Lysaght, supra note 25, at 113 (“[A] one screen rule is likely too limiting”; a writer should aim for “three or four paragraphs.”).